S.C. Vollmer · York University · IEEE VR 2026 DC

Relational Haptic Co-Creation for Kinaesthetic Creativity in XR ✨🧤

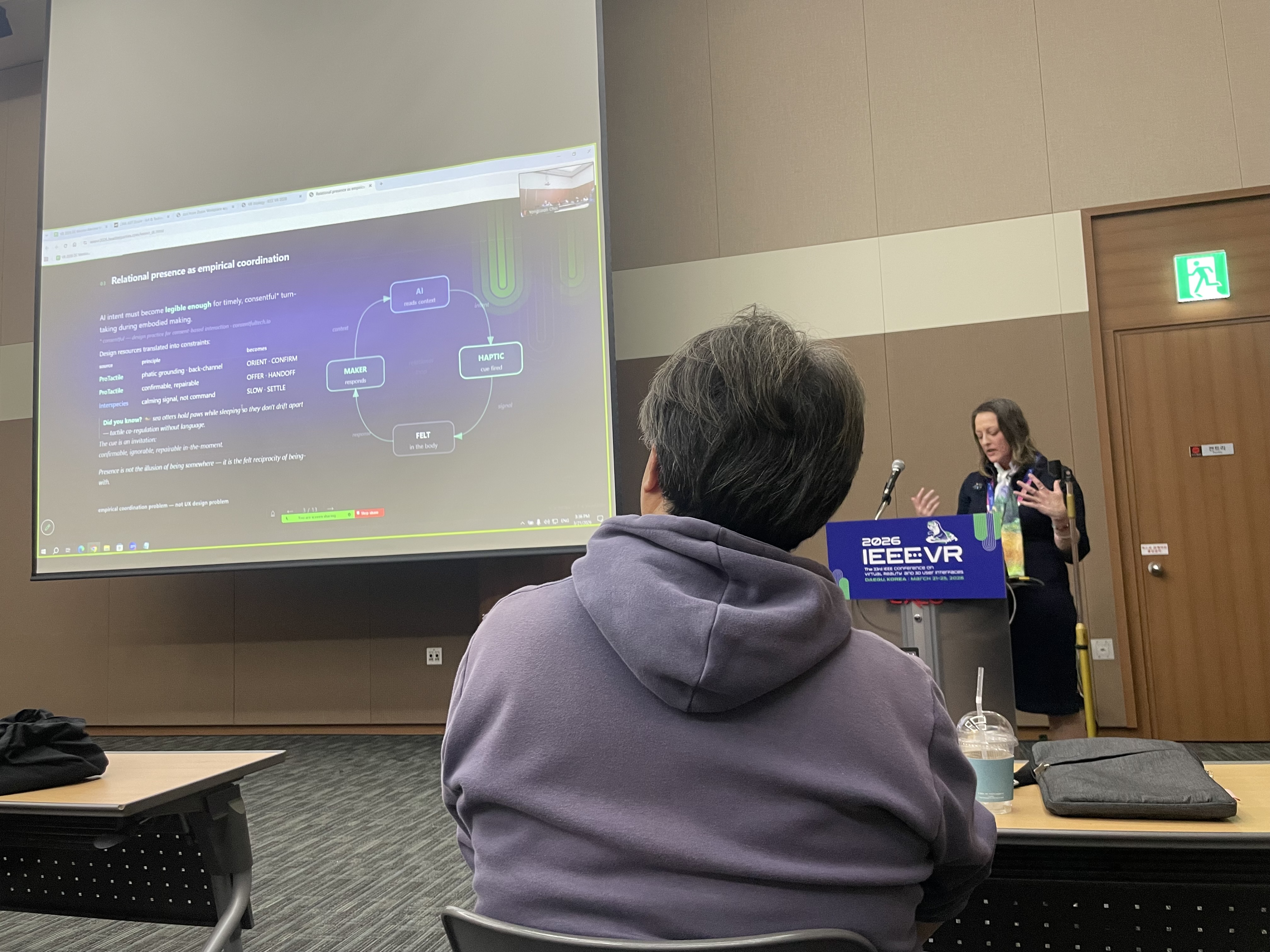

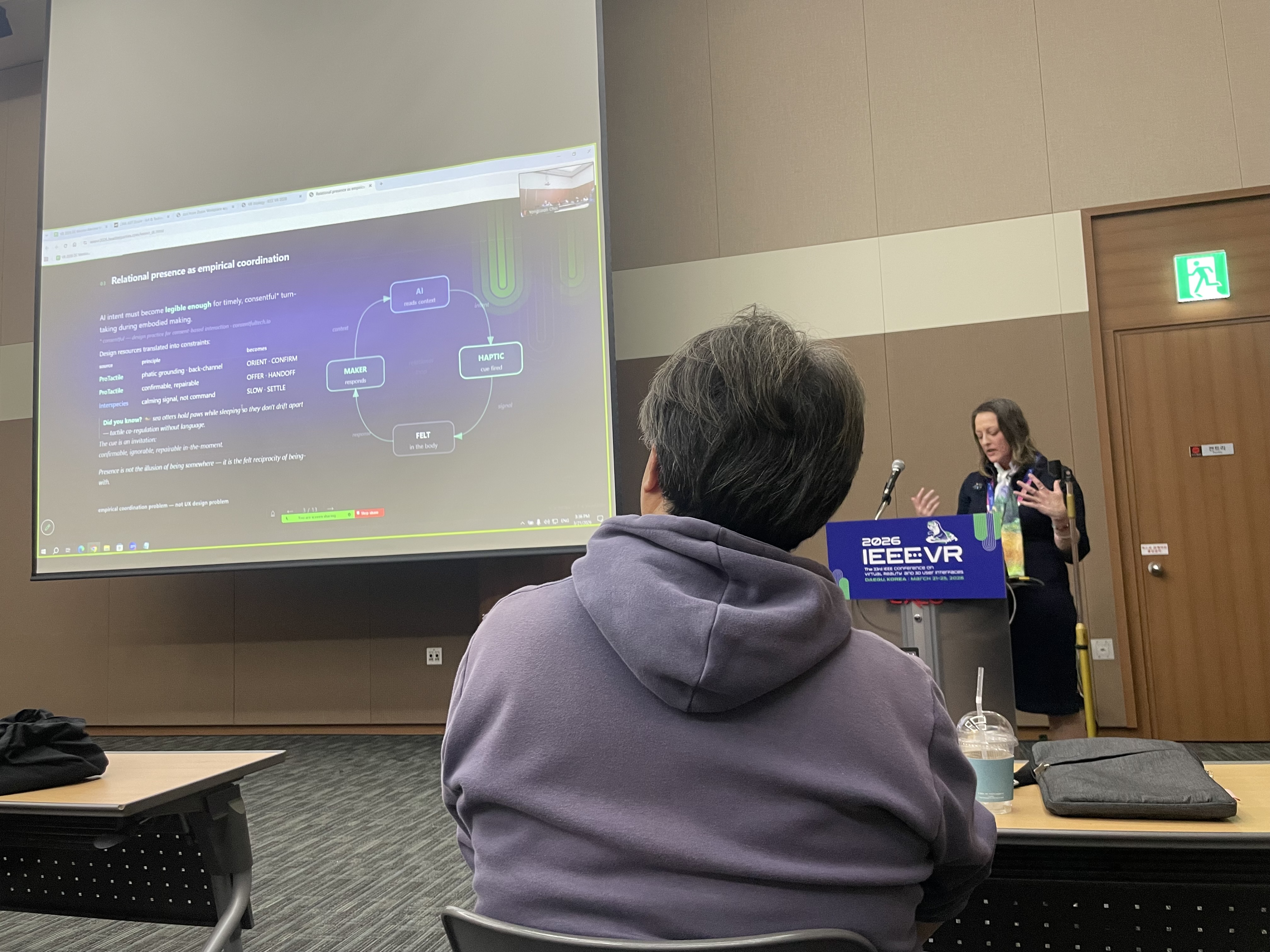

How can an AI partner stay legible and negotiable when the primary interface is the body — not the text box?

VR Ecology is a research-creation project exploring relational haptic interaction, kinaesthetic creativity, and adaptive AI attunement within extended reality.

A system that runs on the body's logic

The system integrates gesture, sound, spatial modulation, and intensity-based feedback to support interability-driven co-creation in immersive environments.

What is VR Ecology?

VR Ecology is a dissertation research-creation project at York University. It asks what AI collaboration looks like when the primary interface is the body — not the text box. Generative AI keeps pulling creative practice toward language: stop moving, stop listening, start explaining. XR runs on a different logic. You lean, reach, hesitate, feel a pull. That somatic logic deserves an AI that can operate within it.

The system uses a small, learnable haptic vocabulary — drawn from consentful design principles, ProTactile phatic grounding, and interspecies coordination research — to let an AI partner signal intent, offer turns, and regulate pace through the body. No text prompts. No interruption. The cue is an invitation: confirmable, ignorable, repairable in the moment.
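One way to read "confirmable, ignorable, repairable" is as three possible outcomes of a single offered cue. The sketch below is a minimal, hypothetical model of that idea, not the project's implementation: the `CueOutcome` names, the `MakerResponse` fields, and the 2-second response window are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CueOutcome(Enum):
    CONFIRMED = "confirmed"  # the maker took up the invitation
    IGNORED = "ignored"      # no uptake; the AI backs off, nothing is forced
    REPAIRED = "repaired"    # the maker redirected or corrected the cue


@dataclass
class MakerResponse:
    """Hypothetical summary of what the body did after a cue."""
    seconds_after_cue: float
    took_up_cue: bool       # e.g. turned toward the side that was tapped
    counter_gesture: bool   # e.g. a "not now" gesture


RESPONSE_WINDOW_S = 2.0  # assumed window; would need tuning per session


def resolve(response: Optional[MakerResponse]) -> CueOutcome:
    """Classify a cue's outcome. No response is a valid answer, not an error."""
    if response is None or response.seconds_after_cue > RESPONSE_WINDOW_S:
        return CueOutcome.IGNORED
    if response.counter_gesture:
        return CueOutcome.REPAIRED
    if response.took_up_cue:
        return CueOutcome.CONFIRMED
    return CueOutcome.IGNORED
```

Treating silence as `IGNORED` rather than as a failure is the design choice that keeps a cue an invitation: the AI never needs the maker to stop and explain.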

50+ live sessions captured. The open questions I'm bringing to the DC are products of that accumulated work — not its starting point.

* Consentful: design practice for consent-based interaction; not a checkbox but an ongoing, renegotiable relationship. See consentfultech.io.

The "engineering backbone" cast of characters

Not animals (yet) — more like tiny helpful gremlins that keep the scene alive.

(•‿•)ノ *tap* "i translate touch"

(ง'̀-'́)ง ♫ "i turn motion into music"

⟡⟡⟡ "i rearrange the light"

(˘▾˘)~ "i follow the shimmer"

(•ᴗ•) "we adjust together"

(o_o)📝 "i remember politely"

A small, learnable haptic language

Six signals. Three coordination functions. Runs on full-body add-on haptics: 9 devices, 40+ motor points of contact. bHaptics 🇰🇷 (IEEE VR 2026 sponsor) confirmed working end-to-end.

| function | signal | means |
|---|---|---|
| ORIENT | bilateral shoulder tap · 200ms | "look here" |
| OFFER | soft double-pulse · ~500ms | "may I?" |
| HANDOFF | firm bilateral · 500ms sustained | "your turn" |
| CONFIRM | quick bilateral tap · 100ms | "got it" |
| SLOW | sustained low bilateral · 800ms | "ease the pace" |
| SETTLE | descending intensity · 1s | "rest here" |
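To make the vocabulary concrete, here is a minimal sketch that encodes each signal as an intensity envelope. Only the cue names and durations come from the table; the intensity levels, the 150 ms pulse length in OFFER, and the five-step staircase for SETTLE are hypothetical choices for illustration.

```python
from typing import Dict, List, Tuple

# (start_ms, end_ms, intensity 0.0-1.0); intensity values are illustrative assumptions
Segment = Tuple[int, int, float]


def _staircase(total_ms: int, start: float, steps: int) -> List[Segment]:
    """Descending staircase: `steps` equal segments stepping down from `start`."""
    step_ms = total_ms // steps
    return [
        (i * step_ms, (i + 1) * step_ms, round(start * (1 - i / steps), 2))
        for i in range(steps)
    ]


ENVELOPES: Dict[str, List[Segment]] = {
    "ORIENT":  [(0, 200, 0.6)],                   # bilateral shoulder tap, 200 ms
    "OFFER":   [(0, 150, 0.4), (350, 500, 0.4)],  # soft double-pulse, ~500 ms
    "HANDOFF": [(0, 500, 0.9)],                   # firm bilateral, 500 ms sustained
    "CONFIRM": [(0, 100, 0.7)],                   # quick bilateral tap, 100 ms
    "SLOW":    [(0, 800, 0.25)],                  # sustained low bilateral, 800 ms
    "SETTLE":  _staircase(1000, 0.6, 5),          # descending intensity, 1 s
}


def duration_ms(cue: str) -> int:
    """Total length of a cue: the end of its last segment."""
    return max(end for _, end, _ in ENVELOPES[cue])


def intensity_at(cue: str, t_ms: int) -> float:
    """Intensity a driver should output `t_ms` after the cue starts (0.0 in gaps)."""
    for start, end, level in ENVELOPES[cue]:
        if start <= t_ms < end:
            return level
    return 0.0
```

For example, `intensity_at("OFFER", 200)` falls in the gap between the two pulses and returns `0.0`. Keeping the vocabulary as data rather than code makes it easy to retune a cue without touching the playback logic.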

The AI taps — the maker moves — that movement returns as context for the next move.
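The tap, move, context cycle can be sketched as a small turn loop in which each movement is stored and conditions the next cue. Everything here is hypothetical: `movement_energy` stands in for whatever motion features the system actually extracts, and the thresholds in `next_cue` are placeholder rules, not the project's attunement policy.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Optional


@dataclass
class Turn:
    cue: str                # cue the AI sent, e.g. "OFFER"
    movement_energy: float  # hypothetical 0-1 summary of the maker's motion afterwards


class CoCreationLoop:
    """Hypothetical turn loop: each movement returns as context for the next cue."""

    def __init__(self, memory: int = 8) -> None:
        # Bounded history: only the most recent turns shape the next cue.
        self.context: Deque[Turn] = deque(maxlen=memory)

    def record(self, cue: str, movement_energy: float) -> None:
        self.context.append(Turn(cue, movement_energy))

    def next_cue(self) -> Optional[str]:
        if not self.context:
            return "ORIENT"  # open with an invitation to attend
        recent = [t.movement_energy for t in self.context][-3:]
        mean = sum(recent) / len(recent)
        if mean > 0.8:
            return "SLOW"    # sustained high energy: suggest easing the pace
        if self.context[-1].cue == "HANDOFF" and recent[-1] < 0.1:
            return "SETTLE"  # no uptake after a handoff: let the scene rest
        if mean < 0.3:
            return "OFFER"   # quiet stretch: ask before contributing
        return None          # otherwise, stay out of the way
```

Returning `None` is deliberate in this sketch: most turns should pass without any cue at all.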

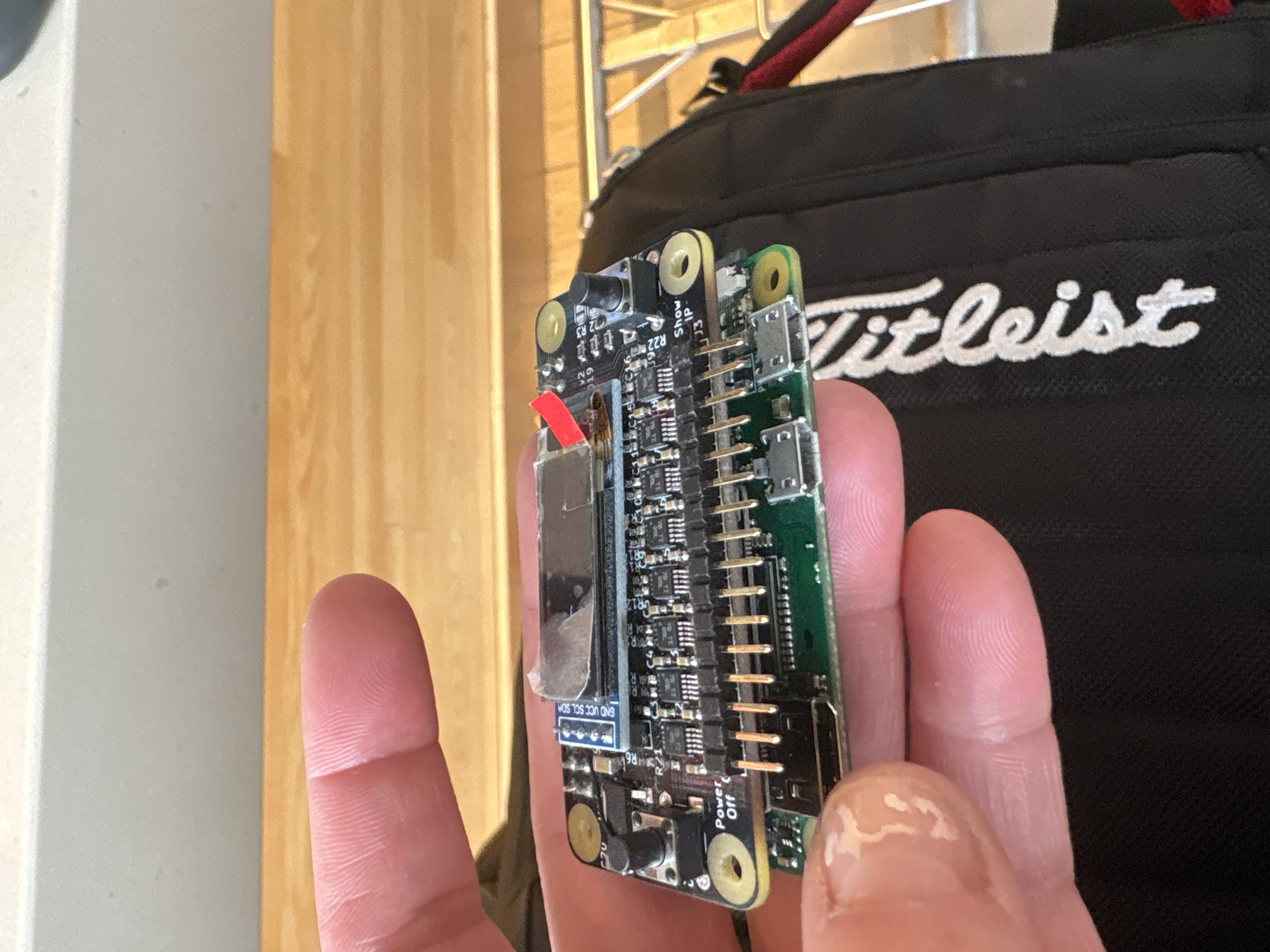

On-Body Haptics — open-source

The custom haptic hardware developed for this research is fully open-sourced — multiple variants, step-by-step build guides, written for students, artists, and budget-conscious labs.

v1 · Arduino belt

5 motors · Bluetooth · ~$40 in parts · beginner-friendly

v2 · Raspberry Pi

8 motors per device · I2C · DRV2605L · 120+ waveform effects · custom PCBs
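Because every DRV2605L answers at the same fixed I2C address (0x5A), eight drivers on one bus need some way to select which one is listening. The sketch below assumes a TCA9548A-style 8-channel multiplexer at its default address 0x70; that wiring is our assumption, and the published build guides may solve it differently (for example, with separate buses on the custom PCBs). The register addresses follow the DRV2605L datasheet; `play_plan` and `run` are hypothetical helper names, and `run` uses the `smbus2` package.

```python
from typing import List, Optional, Tuple

TCA9548A_ADDR = 0x70  # assumed 8-channel I2C mux at its default address
DRV2605L_ADDR = 0x5A  # DRV2605L's fixed 7-bit I2C address

# DRV2605L registers (datasheet)
REG_MODE = 0x01       # 0x00 = internal trigger, out of standby
REG_LIBRARY = 0x03    # 1-5 = ERM libraries A-E, 6 = LRA library
REG_WAVESEQ1 = 0x04   # first of eight waveform sequencer slots (0x04-0x0B)
REG_GO = 0x0C         # write 1 to start playback

# (i2c_address, register or None for a bare byte write, value)
Write = Tuple[int, Optional[int], int]


def play_plan(motor: int, effect: int, library: int = 1) -> List[Write]:
    """I2C writes that play one ROM effect on one motor (assumes ERM motors;
    LRAs would also need the feedback-control register 0x1A configured)."""
    if not 0 <= motor <= 7:
        raise ValueError("the mux has 8 channels: motor must be 0-7")
    if not 1 <= effect <= 123:
        raise ValueError("DRV2605L ROM effects are numbered 1-123")
    return [
        (TCA9548A_ADDR, None, 1 << motor),         # route the bus to this motor's driver
        (DRV2605L_ADDR, REG_MODE, 0x00),
        (DRV2605L_ADDR, REG_LIBRARY, library),
        (DRV2605L_ADDR, REG_WAVESEQ1, effect),
        (DRV2605L_ADDR, REG_WAVESEQ1 + 1, 0x00),   # 0 terminates the sequence
        (DRV2605L_ADDR, REG_GO, 0x01),
    ]


def run(plan: List[Write], bus_number: int = 1) -> None:
    """Execute a plan on a Raspberry Pi I2C bus (requires smbus2 and hardware)."""
    from smbus2 import SMBus  # imported lazily so plans can be built and tested off-device

    with SMBus(bus_number) as bus:
        for addr, reg, value in plan:
            if reg is None:
                bus.write_byte(addr, value)
            else:
                bus.write_byte_data(addr, reg, value)
```

Separating the write plan from the bus access keeps the addressing logic testable on a laptop; only `run` needs the Pi.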

Schematics, code, wiring diagrams, and build guides all documented. v3 in progress — contributions welcome. If you need haptics and can't afford a commercial kit: please use it, fork it, contribute back.

↗ misscrispencakes.github.io/On-body-haptics/

↗ bhaptics-http: HTTP REST bridge for bHaptics · `pip install bhaptics-http`

The questions arrive late, not early

The open questions I'm bringing to the DC are products of accumulated work, not its starting point.

Three open questions for the DC

Where the accumulated work has run into genuine open problems —

System walkthrough video

Teaser and walkthrough coming soon — drop back after the conference.

Contact

S.C. Vollmer · York University · The Alice Lab

Contact: TBA